On 24 April 2026, Mark Zuckerberg stood in front of Meta employees and delivered a message his communications team had been workshopping for weeks. The 8,000 forthcoming layoffs, he told them, were “about capex, not AI productivity” [1]. The framing was deliberate. In the same week, Meta had revised its 2026 capital expenditure guidance upward to $125–145 billion, a number roughly equivalent to a tenth of the Indonesian economy [2]. The cuts, in Zuckerberg’s account, were the operating-expense rebalancing required to fund that level of infrastructure investment.

The market nonetheless read this as an “AI replacing labor” story. Headlines from CNBC to Tom’s Hardware framed the layoffs as evidence that the AI-driven labor crisis had finally arrived [3][4]. Snap, which announced a 16 percent workforce cut citing AI efficiencies in the same window, was folded into the same narrative. The Layoffs.fyi tally crossed 92,000 tech-sector cuts for 2026 by the first week of May.

That narrative is at least incomplete. What is actually happening is more important, and more interesting, for anyone trying to understand where AI value will accrue over the next decade.

The cuts are not new — the framing is

The wave of Meta layoffs announced in April 2026 is the latest in an arc that now stretches back nearly four years. Including the 8,000-person round due in May 2026, Meta has eliminated approximately 33,000 positions since November 2022 [5]:

- November 2022: 11,000 cut, framed at the time as a correction to pandemic-era over-hiring [5].

- 2023: another 10,000 cut during what Zuckerberg branded the “year of efficiency”, which became a template that propagated across Big Tech [5].

- 2024: smaller rounds in January (Messenger) and throughout the year (WhatsApp, Instagram, Reality Labs), typically a few hundred at a time and described internally as “permanent efficiency” [6].

- Early 2025: a roughly 5 percent reduction (~3,600 employees) framed as a performance management exercise targeting “low performers” [7].

- April–May 2026: 8,000 announced for the first wave, with company guidance acknowledging more in the second half [8].
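As a quick arithmetic check on the approximately 33,000 figure, the rounds with a stated headcount can be summed and compared against the total. The sketch below uses only the numbers quoted in the list above; the 2024 rounds have no stated total, so the gap is inferred rather than reported, and the variable names are illustrative.

```python
# Rounds with a stated headcount, as quoted in the list above.
quantified_rounds = {
    "November 2022": 11_000,
    "2023": 10_000,
    "Early 2025": 3_600,
    "April-May 2026": 8_000,
}

# "Approximately 33,000" is a rounded figure, so treat the gap as indicative only.
stated_total = 33_000

quantified_sum = sum(quantified_rounds.values())
implied_2024 = stated_total - quantified_sum  # left over for the unquantified 2024 rounds

print(f"Sum of quantified rounds: {quantified_sum:,}")
print(f"Implied by the rounded total for 2024: ~{implied_2024:,}")
```

The remainder is in line with the description of the 2024 rounds as a few hundred at a time, though the rounding in the headline total means it should not be read as a reported number.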

What changed is not the existence of layoffs but the rationale Meta is willing to put on them publicly. The 2022 cuts were a macro story; the 2023 and 2024 cuts were an efficiency story; the 2025 cuts were a performance story. The 2026 cuts are the first round Meta has explicitly tied to capex reallocation toward AI infrastructure. That is the genuine shift in framing, and it is more honest about the underlying mechanic than any of the prior rationales.

The capex reset, decoded

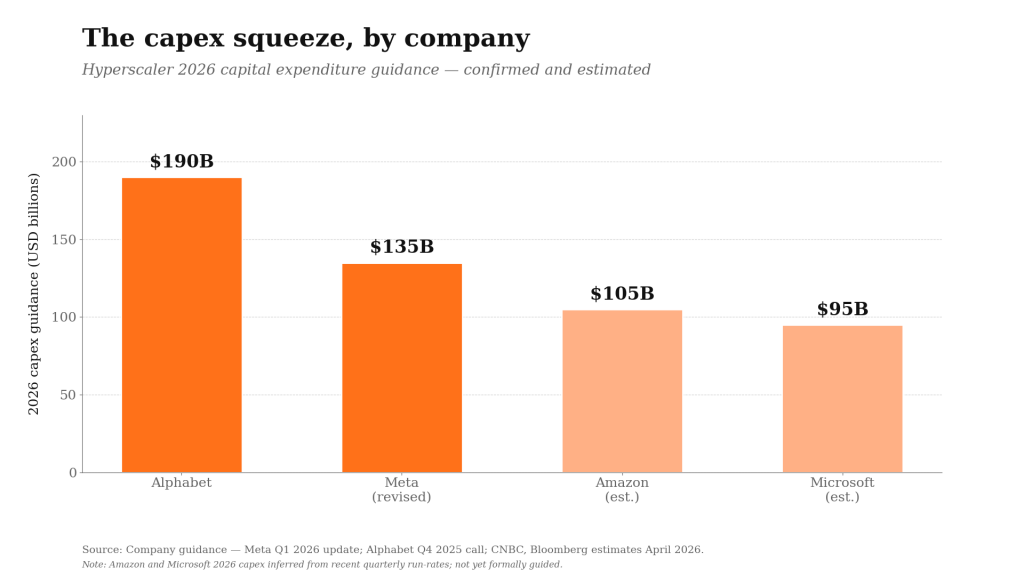

Frontier-model training and inference are the most capital-intensive computing workloads ever attempted at commercial scale. Alphabet has guided to $190 billion of 2026 capex; Microsoft and Amazon are estimated to be in the same band; Meta’s revised $125–145 billion puts it in the same league [9]. These numbers are not strategic choices in the conventional sense: they are the entry fee to remain a model-tier participant.

Exhibit 1. The capex squeeze, by company. Hyperscaler 2026 capital expenditure guidance — confirmed (Alphabet, Meta) and estimated (Amazon, Microsoft). Source: company guidance — Meta Q1 2026 update; Alphabet Q4 2025 call; CNBC and Bloomberg estimates, April 2026.

What Zuckerberg said in that town hall, stripped of euphemism, is that Meta’s cost structure cannot absorb both peak-cycle hiring and peak-cycle infrastructure investment simultaneously. So the operating budget gets re-cut to make room. The labor reduction is not driven by the productivity gains of the AI Meta is building. It is driven by the cash demands of building it.

This distinction matters because the two narratives imply very different futures.

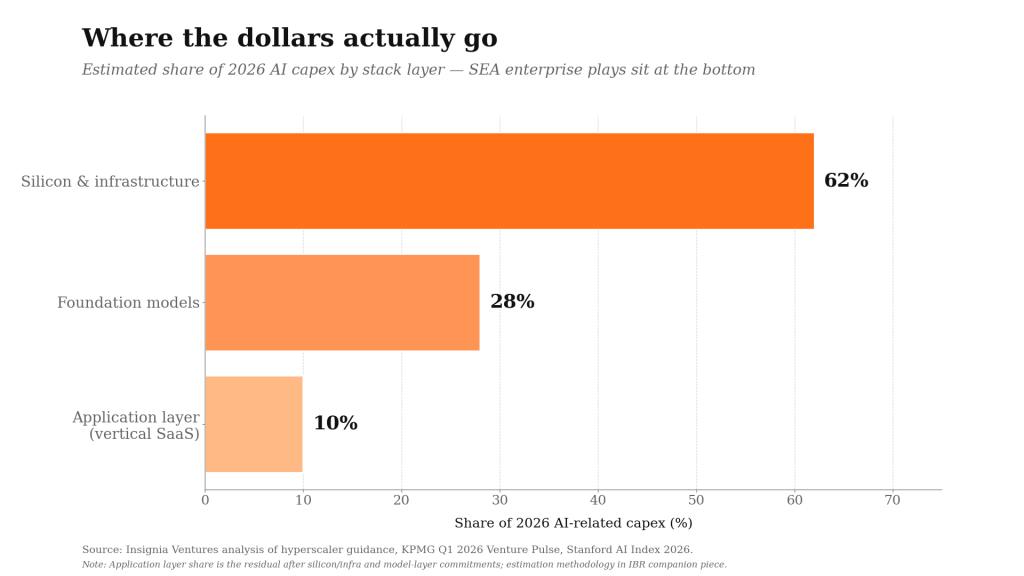

The “AI replaces labor” story implies labor cost moves toward zero, productivity moves up, and the value pools follow. The “capex squeeze” story implies value concentrates first at the silicon and infrastructure layer, then potentially at the model layer if any model develops durable economic moats, and only then, if ever, at the application layer above. The second story is consistent with what is actually playing out in Big Tech P&Ls. It is also consistent with where venture returns are concentrating: Q1 2026 saw approximately 80 percent of global venture funding go to AI, with the largest cheques going to the model labs and the infrastructure providers serving them.

The implication for Southeast Asia is non-obvious.

Where SEA actually plays

If the dominant AI value pools sit at the silicon and model layers, Southeast Asia is not a participant. The region does not host a frontier-model lab and will not host a hyperscaler control plane, and despite its expanding datacentre footprint, the silicon design and fabrication economics live elsewhere. Microsoft’s $1.7 billion Indonesia commitment, Google’s $2 billion Malaysia datacentre programme, and AWS’s $9 billion Singapore expansion are real numbers, but they describe SEA as a deployment market, not a value-capture layer [10].

Exhibit 2. Where the dollars actually go. Estimated share of 2026 AI-related capex by stack layer — silicon and infrastructure absorb the majority; the application layer, where SEA enterprise plays sit, is the residual. Source: Insignia Ventures analysis of hyperscaler guidance, KPMG Q1 2026 Venture Pulse and Stanford AI Index 2026. Application-layer share is the residual after silicon/infrastructure and model-layer commitments.

The case for SEA enterprise AI, then, has to be made one layer up — in the application layer where models meet specific industries, regulated data, and labor-cost arbitrage that frontier labs cannot directly serve. This is the layer where Insignia’s enterprise AI portfolio operates, and it is the layer where the unit economics actually look defensible.

WIZ.AI is the cleanest example. The Singapore-based company describes itself as a voice AI platform pioneering the enterprise application of large language models in Southeast Asia, anchored on a “talkbot” product line that delivers what WIZ.AI characterises as a hyper-localized, omni-channel customer engagement layer. It closed a Series B in November 2025 led by SMBC Asia Rising Fund, with participation from Beacon Venture Capital (Kasikorn Bank), SMIC SG Holdings, Singtel Innov8, and Granite Asia [11].

The funding thesis was not about model capability — WIZ.AI is not building a foundation model — but about deployment at scale inside ASEAN banks. The company’s recent technical writeup, “From Pilot to Production: Deploying Voice AI in ASEAN Banks”, reads less like a product announcement and more like an operations manual for institutions that have decided voice AI is now infrastructure [12].

“Across ASEAN’s banking sector, three Voice AI use cases have clearly moved from pilot to full-scale production [— collections, inbound service, and customer engagement] … Deploying [Voice AI] in production at enterprise scale, however, exposes gaps that a contained proof-of-concept rarely reveals — particularly around integration, compliance, and operational resilience … The era of the Voice AI pilot in ASEAN banking is ending. The era of production is here.” [12]

The economics are inverted relative to a frontier lab. WIZ.AI’s gross margins improve as the model layer commodifies, because cheaper inference flows directly into expanded contribution per call. The competitive moat is integration depth: hyper-localization across Bahasa, Thai, Vietnamese, Tagalog and other ASEAN languages, data residency compliance for regional regulators, and operational workflows that match how regional banks actually run collections, customer service, and outbound campaigns. None of that is a problem the model labs are positioned to solve directly.
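The margin mechanics can be illustrated with a minimal sketch. All figures below are hypothetical placeholders, not WIZ.AI data; the only assumption carried over from the text is that the price per call holds while inference cost falls.

```python
def contribution_margin(price: float, inference_cost: float, other_cost: float) -> float:
    """Share of per-call revenue left after variable costs."""
    return (price - inference_cost - other_cost) / price


# Hypothetical per-call figures in US dollars, for illustration only.
PRICE_PER_CALL = 0.10
OTHER_VARIABLE_COST = 0.03  # telephony, support and similar costs (assumed)

# An assumed trajectory of falling inference cost as the model layer commodifies.
margins = [
    contribution_margin(PRICE_PER_CALL, inference_cost, OTHER_VARIABLE_COST)
    for inference_cost in (0.04, 0.02, 0.01)
]

for margin in margins:
    print(f"contribution margin: {margin:.0%}")
```

The point of the sketch is directional: with price and other variable costs held constant, every reduction in inference cost passes straight through to contribution per call, which is the opposite of a frontier lab's position when model prices fall.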

fileAI tells the parallel story in the unstructured-data layer. The Singapore-headquartered company — which positions itself as an “AI-native data preparation and automation platform” with what it describes as “the world’s only horizontal file processing agent” — was founded by CEO Christian Schneider and COO Clare Leighton in 2021 [13]. The platform turns unstructured and fragmented files into clean, structured data that downstream agents can act on, processing more than 200 million files annually for enterprise clients including MS&AD, Toshiba and KFC, and reporting cumulative customer savings of over 3.2 million hours and $60 million in processing cost [14].

The Series A in February 2025 — $14M led by Illuminate Financial, Antler Elevate, Insignia Ventures Partners and Heinemann — brought total funding to roughly $26 million across four rounds [15]. By December 2025, fileAI was operating in 18 countries with team members across eight, and Christian Schneider had appeared on NYSE Floor Talk to discuss the company’s positioning as the foundation for agentic AI at scale [16][17]. The Insignia Business Review founders interview from 23 December 2025 captures the strategic logic: the moat is not a better model, it is the operational compounding of having ingested every variant of every document type across every language at every quality level [17].

“The shift to agentic AI is completely transformative — like a once-in-a-generation type of shift. What cloud did for infrastructure, effort, and cost in starting and running companies, agentic AI is doing the same thing for operational lift.” [17]

Both companies illustrate the same investment thesis. The pitch is not “we have a better AI than OpenAI”. The pitch is integration depth as moat, with model commodification as tailwind.

The labor-cost question Meta did not address

The Western framing of AI-and-labor focuses on displacement: which knowledge-worker roles get automated, on what timeline, with what social consequences. The Southeast Asian framing is different and arguably more economically literal.

In SEA enterprise contexts, labor cost is genuinely the moat. A bank’s outbound collections operation runs on hundreds of agents, each processing dozens of calls per day, with unit economics that are sensitive to every percentage point of cost per call. Voice AI does not replace those agents on a one-to-one basis — it changes the cost curve such that more outreach becomes economically viable, conversion improves through better targeting, and the agents who remain are deployed against the highest-value cases. The same logic applies to document processing in insurance, claims, trade finance, and KYC.
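The cost-curve argument can be sketched as a simple viability threshold: a call is worth making only when its expected recovery exceeds the cost of making it. The account values and per-call costs below are hypothetical, chosen purely to show how a lower cost per call widens the set of accounts worth contacting.

```python
def viable_calls(expected_recovery: list[float], cost_per_call: float) -> list[float]:
    """Keep only the accounts where expected recovery exceeds the cost of calling."""
    return [value for value in expected_recovery if value > cost_per_call]


# Hypothetical expected recovery per call, in US dollars, for five accounts.
expected_recovery = [0.20, 0.50, 1.00, 2.50, 6.00]

HUMAN_COST_PER_CALL = 1.50     # assumed
VOICE_AI_COST_PER_CALL = 0.30  # assumed

human_viable = viable_calls(expected_recovery, HUMAN_COST_PER_CALL)
ai_viable = viable_calls(expected_recovery, VOICE_AI_COST_PER_CALL)

print(f"viable at human-agent cost: {len(human_viable)} of {len(expected_recovery)}")
print(f"viable at voice-AI cost:    {len(ai_viable)} of {len(expected_recovery)}")
```

In this toy example the accounts that clear the human-agent threshold remain the natural place to deploy the remaining agents, while the lower cost per call brings smaller accounts into range.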

This is not the same conversation as the Meta or Snap layoffs. It is closer to a productivity conversation, where the AI tool meaningfully expands the addressable revenue per worker rather than substituting for the worker outright. The 92,000 tech-sector layoffs of 2026 are happening at companies whose unit economics are dominated by R&D and infrastructure spend, not by frontline operating labor. SEA enterprise customers have the inverse cost structure.

That asymmetry — different cost structures, different AI deployment logic, different value-capture mechanics — is what makes the SEA enterprise AI thesis legible. It is not a story about replacing labor; it is a story about expanding what labor can profitably reach.

What this means for valuation and exit

The exit question for SEA enterprise AI is genuinely open. The previous SEA tech cycle was dominated by consumer software comparables (superapps, marketplaces, fintech consumer plays), and the exit pathways that materialised (Grab, GoTo, Sea Ltd) were structured around growth-at-all-costs metrics that public markets eventually re-priced. The application-layer enterprise AI cohort emerging now sits closer to the global B2B SaaS template than to the prior SEA consumer cohort, but it is too early to confidently name comparables; the regional enterprise software exit set is still small enough that the relevant comparables will likely come from the next two to four years rather than from the existing historical record.

What can be said with more confidence is the directional logic. WIZ.AI’s customer concentration in regional banks, fileAI’s deployment inside multinational corporates, the broader Insignia application-layer portfolio: these are companies whose underlying unit economics, if compounded over the next three to five years, will be evaluated by acquirers and public-market investors against retention, contribution margin, and net revenue expansion — the metrics that B2B software is consistently re-priced on. Whether the 2027–2029 exit window produces strategic acquisitions, regional listings, or US listings is the open question. The valuation question, in turn, is whether the next round of SEA enterprise AI capital correctly underwrites the difference between application-layer compounding and consumer-layer scale.

The Meta capex story will continue to dominate the AI headline cycle through 2026 and beyond, simply because the dollar amounts are larger and the layoff narratives are more emotionally legible. Beneath the headlines, though, a different story is being written in Singapore, Jakarta, Manila, and Bangkok — one in which the AI value chain extends past silicon and models into the application layer, and one in which Southeast Asia, for once, is positioned to capture the right end of it.

References

1. The Next Web. “Zuckerberg tells Meta employees the layoffs are about capex, not AI productivity.” 23 April 2026.

2. DataCenterDynamics. “Meta raises planned capex for 2026, plans layoffs for ‘efficiency’ reasons.” 23 April 2026.

3. CNBC. “20,000 job cuts at Meta, Microsoft raise concern that AI-driven labor crisis is here.” 24 April 2026.

4. Tom’s Hardware. “Mark Zuckerberg says Meta is cutting 8,000 jobs to pay for AI infrastructure.” 24 April 2026.

5. Fortune. “Mark Zuckerberg has cut 25,000 jobs at Meta since 2022. Here’s what that says about his leadership.” 27 March 2026.

6. Fortune. “Meta’s quest for ‘efficiency’ sparks new wave of layoffs across departments.” 17 October 2024.

7. Quartz. “Mark Zuckerberg is starting Meta’s ‘intense’ year by laying off low-performing workers.” January 2025.

8. The Next Web. “Meta to cut 8,000 jobs on 20 May with more layoffs planned for second half of 2026.” April 2026.

9. CNN Business. “Meta to cut 10% of staff as it pours billions into AI.” 23 April 2026.

10. ARC Group. “Harnessing ASEAN’s Data Center Boom.” 2026.

11. PR Newswire. “Voice Agent Pioneer WIZ.AI Raises Tens of Millions in Series B to Scale Enterprise AGI Solution Globally.” 5 November 2025.

12. WIZ.AI. “From Pilot to Production: Deploying Voice AI in ASEAN Banks.” 2026.

13. fileAI. “AI-Native Data Preparation & Automation Platform” (homepage and About). Accessed May 2026.

14. fileAI. Product and customer scale data (200M+ files annually; 3.2M hours and $60M in customer cost savings). Company-published as of May 2026.

15. TNGlobal. “Singapore’s fileAI raises $14M in Series A funding led by Illuminate Financial, Antler Elevate, Insignia, Heinemann and others.” 3 February 2025.

16. NYSE TV (Floor Talk). “Christian Schneider of fileAI Joins Floor Talk.” Season 3, 2025.

17. Insignia Business Review. “fileAI Founders CEO Christian Schneider and COO Clare Leighton on Building a Global AI-Powered Automation Engine.” 23 December 2025.

Paulo Joquiño is a writer and content producer for tech companies, and co-author of the book Navigating ASEANnovation. He is currently Editor of Insignia Business Review, the official publication of Insignia Ventures Partners, and senior content strategist for the venture capital firm, where he started right after graduation. As a university student, he took up multiple work opportunities in content and marketing for startups in Asia. These included interning as an associate at G3 Partners, a Seoul-based marketing agency for tech startups; running tech community engagements at ASPACE Philippines, a coworking space and business community; and interning at workspace marketplace FlySpaces. He graduated with a BS in Management Engineering from Ateneo de Manila University in 2019.