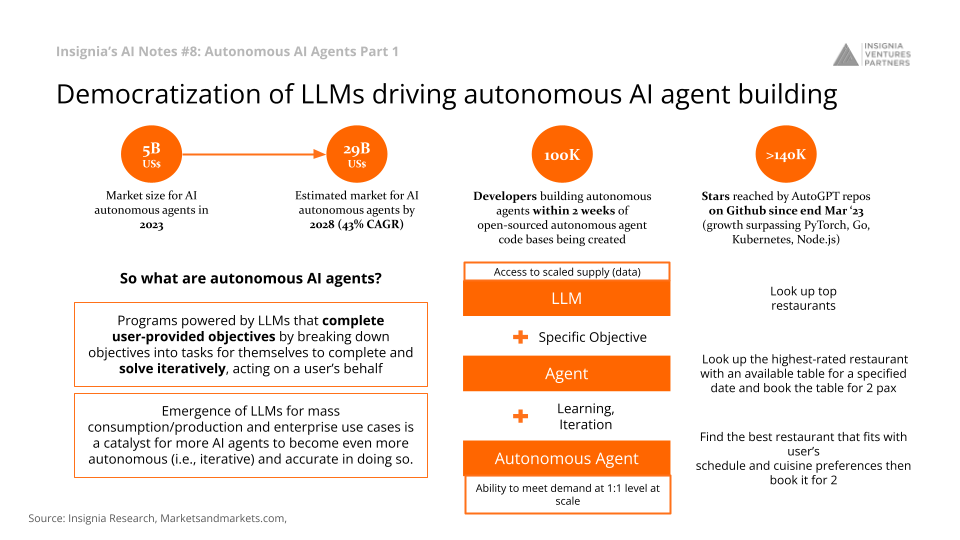

The democratization of Large Language Models (LLMs) has catalyzed the growth of autonomous AI agents, bringing new capabilities and potential to the field and signaling a shift toward a future with Artificial General Intelligence (AGI). The market for autonomous AI agents is estimated to grow from US$5B in 2023 to US$29B by 2028, a CAGR of 43%.
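As a quick sanity check on these figures, assuming both endpoints are rounded, the compound annual growth rate over the five years from 2023 to 2028 works out to:

$$
\text{CAGR} = \left(\frac{29}{5}\right)^{1/5} - 1 \approx 0.42,
\qquad
5 \times 1.43^{5} \approx 29.9
$$

That is, roughly 42% from the rounded endpoints, and a 43% rate applied to US$5B lands at about US$29.9B, so the cited growth rate and market sizes are consistent with each other.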

But what exactly are autonomous AI agents? What are their implications for LLM and AI capabilities? And what current limitations need to be overcome to unlock more commercial use cases? This week’s AI Notes attempts to tackle these questions.


Democratization of LLMs driving autonomous AI agent building


Open-Source Movement

Open-source codebases for autonomous agents have set the stage for rapid development. Within the first two weeks of their creation, almost 100,000 developers had joined the movement, and GitHub repositories such as AutoGPT grew faster than those of PyTorch, Kubernetes, Bitcoin, and Django. The launch of AutoGPT in March 2023 marked a historic moment, as it became one of the fastest-growing GitHub repositories ever. Its rapid climb to 140,000 stars and the emergence of related projects such as BabyAGI have highlighted the potential of existing LLM APIs and reasoning prompts to carry out long-running, iterative work.

Autonomous AI Agents vs LLMs

Autonomous agents, empowered by LLMs, act on behalf of users to break down objectives into tasks, solving them iteratively until the goal is achieved.

With an LLM, we can look up the top restaurants in each city. With an agent, we can look up the highest-rated restaurant with an available table on a specified date and book a table for two. With an autonomous agent, we can ask it to find the best restaurant that fits our schedule and cuisine preferences and then book it for two.

The emergence of LLMs for mass consumption, production, and enterprise use cases is a catalyst for more autonomous AI agents serving a variety of use cases, bridging gaps in familiarity, communication/UX, and data.

How do Autonomous AI Agents Get the Job Done?


Functioning of Autonomous AI Agents

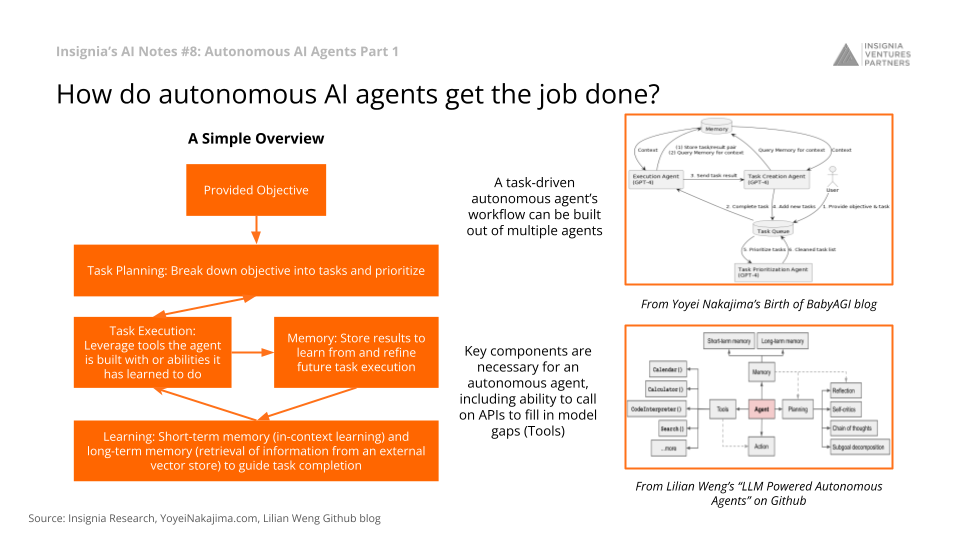

Autonomous AI agents break down complex tasks into subtasks (‘thinking’ step by step). Between steps, they draw on both short-term memory (in-context learning) and long-term memory (information retrieved from an external vector store) to guide their next actions. They can also reflect on their actions and refine their execution accordingly.
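To make the two kinds of memory concrete, here is a minimal sketch under loud assumptions: `embed` is a toy bag-of-words stand-in for a real embedding model, the "vector store" is a plain Python list searched by cosine similarity, and `AgentMemory`, `remember`, `recall`, and `build_context` are hypothetical names, not any framework's API. Short-term memory is a small rolling window of recent steps that would be pasted into the prompt; long-term memory keeps everything and returns only the entries most relevant to the next task.

```python
import math
from collections import Counter, deque


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model (assumption): a term-count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class AgentMemory:
    def __init__(self, short_term_size: int = 3):
        # Short-term memory: only the last few steps, replayed in-context.
        self.short_term = deque(maxlen=short_term_size)
        # Long-term memory: every (embedding, text) pair, i.e. a tiny "vector store".
        self.long_term: list[tuple[Counter, str]] = []

    def remember(self, step: str) -> None:
        self.short_term.append(step)
        self.long_term.append((embed(step), step))

    def recall(self, query: str, k: int = 2) -> list[str]:
        """Return the k long-term entries most similar to the query."""
        q = embed(query)
        ranked = sorted(self.long_term, key=lambda item: cosine(q, item[0]), reverse=True)
        return [text for _, text in ranked[:k]]

    def build_context(self, next_task: str) -> str:
        """Assemble the context an LLM would see before its next step."""
        lines = ["Relevant memories:", *self.recall(next_task)]
        lines += ["Recent steps:", *self.short_term, f"Next task: {next_task}"]
        return "\n".join(lines)


memory = AgentMemory(short_term_size=2)
for step in [
    "user prefers japanese cuisine",
    "user is free friday evening",
    "searched restaurants near office",
    "found three candidate restaurants",
]:
    memory.remember(step)

print(memory.recall("which cuisine does the user prefer", k=1))
print(list(memory.short_term))
```

In this run the cuisine preference has already dropped out of the two-step short-term window, but retrieval from long-term memory still surfaces it, which is the division of labor the paragraph above describes.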

Multiple agents can be woven together into a task-driven autonomous agent workflow, with the ability to call on APIs to fill gaps in the model’s capabilities.
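A task-driven workflow of this kind can be sketched as a queue of tasks, each dispatched to a specialized agent that may enqueue follow-up tasks. This is a hand-rolled illustration loosely in the spirit of task-queue loops like BabyAGI's, not that project's code: every "agent" here is a plain function with hard-coded rules where a real system would prompt an LLM, and the restaurant data, `FREE_TABLES`, and all function names are hypothetical stand-ins for external APIs. The `replan_agent` plays the role of the reflection step, revising the plan when an attempt fails.

```python
from collections import deque
from typing import Callable

# Hypothetical stand-ins for external APIs; a real agent would call live services.
RESTAURANTS = {"japanese": ["Sushi Ko", "Ramen Ya"], "thai": ["Baan Thai"]}
FREE_TABLES = {"Ramen Ya"}


def search_agent(state: dict) -> list[str]:
    # "API call": look up restaurants matching the current cuisine preference.
    state["candidates"] = RESTAURANTS.get(state["cuisine"], [])
    return ["check_availability"]


def availability_agent(state: dict) -> list[str]:
    # "API call": keep only candidates that have a free table.
    state["available"] = [r for r in state["candidates"] if r in FREE_TABLES]
    return ["book"] if state["available"] else ["replan"]


def booking_agent(state: dict) -> list[str]:
    state["booking"] = f"Table for {state['party_size']} at {state['available'][0]}"
    return []  # No follow-up tasks: the goal is reached.


def replan_agent(state: dict) -> list[str]:
    # Reflection step: the last attempt failed, so revise the plan and retry.
    if state["fallback_cuisines"]:
        state["cuisine"] = state["fallback_cuisines"].pop(0)
        return ["search"]
    state["booking"] = None  # Out of options: stop instead of looping forever.
    return []


AGENTS: dict[str, Callable[[dict], list[str]]] = {
    "search": search_agent,
    "check_availability": availability_agent,
    "book": booking_agent,
    "replan": replan_agent,
}


def run(state: dict, first_task: str = "search", max_steps: int = 10) -> list[str]:
    """Pop tasks off a queue, dispatch each to its agent, and enqueue any follow-ups."""
    queue = deque([first_task])
    trace: list[str] = []
    while queue and len(trace) < max_steps:
        task = queue.popleft()
        trace.append(task)
        queue.extend(AGENTS[task](state))
    return trace


state = {"cuisine": "thai", "fallback_cuisines": ["japanese"], "party_size": 2}
trace = run(state)
print(trace)
print(state["booking"])
```

Starting from a Thai preference with no free tables, the loop searches, finds nothing available, replans to the Japanese fallback, and books "Table for 2 at Ramen Ya". The `max_steps` budget is a simple guard against the runaway loops that long-running agents are prone to.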

See framework examples from Lilian Weng on GitHub or from Yohei Nakajima.

Autonomous AI Agents Are Still Early to the Field but, with Training, Have Massive Potential


Implications of Autonomous AI Agents

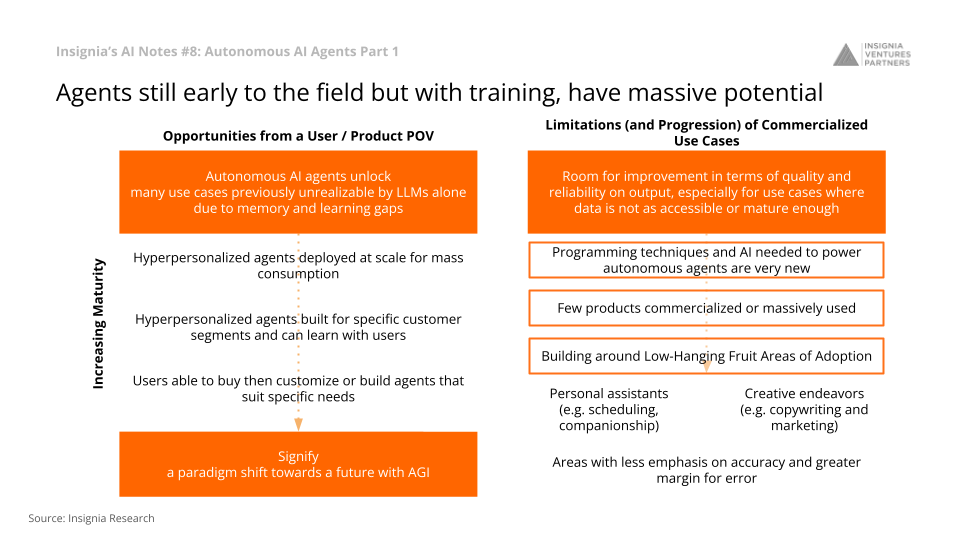

The burgeoning field of autonomous agents has fired our imaginations with previously unrealizable possibilities, signaling a paradigm shift towards AGI (Artificial General Intelligence).

Because agents can execute extremely complex tasks and solve problems, they unlock many use cases previously unrealizable with LLMs alone. For example, autonomous AI agents could accelerate the move from one-to-many to one-to-one tuition in edtech. Tutor agents can continuously adapt to and support a child’s learning experience, delivering a highly personalized course based on the child’s needs.

Limitations and Immediate Applications of Autonomous AI Agents

The development of autonomous AI agents is still nascent, with only a few commercialized products. This is primarily because the programming techniques and AI needed to power autonomous agents are very new. Hence, the market will take off first in personal assistants (e.g., scheduling, companionship) and creative endeavors (e.g., copywriting and marketing, or other areas with less emphasis on accuracy and a greater margin for error) before being adopted at scale by enterprises.

---

The democratization of autonomous AI agents is more than a trend; it is a revolution. As LLMs give way to agents capable of independent and intricate problem-solving, we stand on the cusp of a new era. Although there are limitations, the current growth and interest signal a game-changing future, one where technology not only mimics human ability but becomes an extension of our capacity to think, learn, and create.

Follow our LinkedIn page for the latest updates on our weekly AI Notes and other insights into Southeast Asia’s innovation landscape.

Author’s Note: This article was written with insights and research from our investment team. Reach out to them if you’re building in this space out of Southeast Asia and would like a deeper conversation.

Paulo Joquiño is a writer and content producer for tech companies, and co-author of the book Navigating ASEANnovation. He is currently Editor of Insignia Business Review, the official publication of Insignia Ventures Partners, and senior content strategist for the venture capital firm, where he started right after graduation. As a university student, he took up multiple work opportunities in content and marketing for startups in Asia. These included interning as an associate at G3 Partners, a Seoul-based marketing agency for tech startups; running tech community engagements at ASPACE Philippines, a coworking space and business community; and interning at workspace marketplace FlySpaces. He graduated with a BS in Management Engineering from Ateneo de Manila University in 2019.