Most coverage of Jensen Huang’s “five-layer cake” frames it as a technical taxonomy of the global AI stack: energy, chips, infrastructure, models, applications. Read from Southeast Asia, the framework is more useful as a triage tool. It draws a clean line between the layers the region will largely import and the layers where it can build category leaders.

On the mainstage at the World Economic Forum in Davos in early 2026, alongside BlackRock CEO Larry Fink, NVIDIA CEO Jensen Huang reframed the AI buildout as “a five-layer cake” (energy, chips, infrastructure, models, and applications), describing it as “the largest infrastructure buildout in human history” [1]. The framework has since become a common reference point for investors trying to make sense of where AI value will accrue. For most global readers, that is enough: the cake is a map of the full stack and a guide to where capital should go.

For Southeast Asia, the more useful argument sits in a single observation: the bottom three layers (energy, chips, AI compute infrastructure) will be largely decided in the United States, China, the Middle East, and Europe, even when the data centers themselves end up sited in Johor or Jakarta. The top two layers (models and applications) are where local language, regulatory nuance, and operational realities compound into defensibility, and where Southeast Asian founders are best positioned to build at scale. Defensibility on this map is not about excelling in a single layer but about positioning across at least two adjacent layers, which is exactly the kind of judgment a stack-level lens makes possible.

Layer 1: Energy

At the very bottom of Huang’s AI cake sits energy. Intelligence generated in real time requires power generated in real time, and the sheer computational demands of training and running advanced AI models have made electricity the binding constraint on how much intelligence the system can produce.

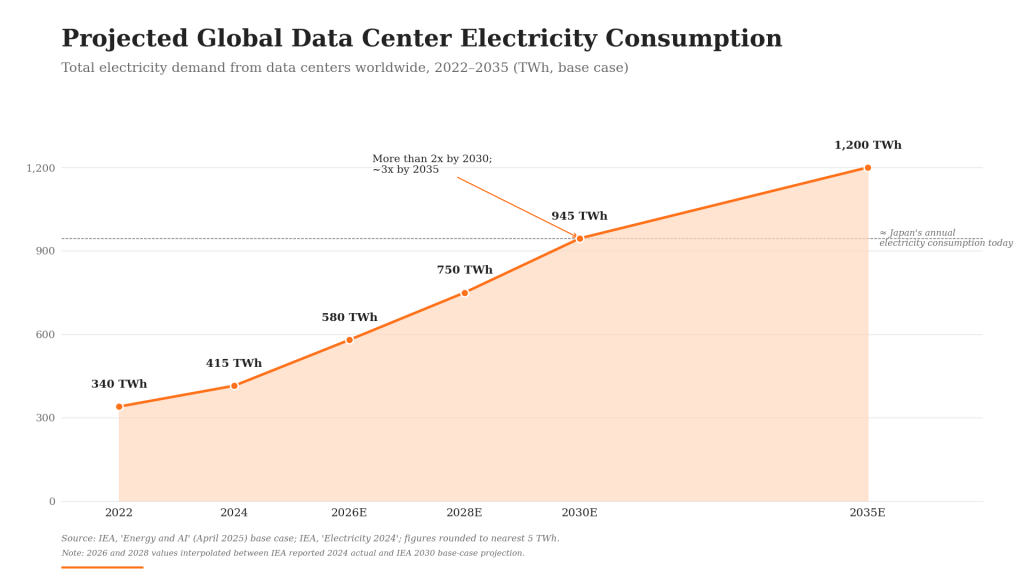

Global data center electricity consumption reached an estimated 415 terawatt-hours (TWh) in 2024, roughly 1.5% of total world electricity consumption, and the International Energy Agency projects it will more than double to about 945 TWh by 2030, slightly more than Japan’s total annual electricity consumption today [2]. Electricity consumption by accelerated servers, the AI-specific workload class, is projected to grow at roughly 30% annually in the IEA’s base case.

Projected global data center electricity consumption: forecast increase in data center electricity demand, driven by AI workloads. Source: International Energy Agency, Energy and AI (April 2025); IEA, Electricity 2024.
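For readers who want to sanity-check the pace implied by those IEA figures, the arithmetic is a simple compound annual growth rate (CAGR). The Python sketch below is a back-of-envelope check, not IEA methodology: it assumes a six-year window (2024 to 2030), uses only the two totals cited above, and the helper names `cagr` and `compound_multiple` are our own hypothetical illustrations. On those assumptions, total data center demand grows at roughly 15% a year, about half the ~30% rate cited for accelerated servers.

```python
def cagr(start: float, end: float, years: float) -> float:
    """Return the compound annual growth rate that turns `start` into `end` over `years`."""
    if start <= 0 or years <= 0:
        raise ValueError("start and years must be positive")
    return (end / start) ** (1.0 / years) - 1.0


def compound_multiple(rate: float, years: float) -> float:
    """Return how many times larger a quantity becomes after growing at `rate` for `years`."""
    return (1.0 + rate) ** years


# Assumption: a six-year window between the two IEA data points.
YEARS = 2030 - 2024

# IEA base case as cited in the text: ~415 TWh in 2024 -> ~945 TWh in 2030.
total_rate = cagr(415.0, 945.0, YEARS)

# Illustrative only: the ~30% annual accelerated-server rate, compounded over the
# same window. The IEA's own base year and scope for that rate may differ.
accelerated_multiple = compound_multiple(0.30, YEARS)

if __name__ == "__main__":
    print(f"Implied CAGR, all data centers: {total_rate:.1%}")
    print(f"Accelerated servers at 30%/yr over {YEARS} years: {accelerated_multiple:.1f}x")
```

The gap between the two rates is the point: if AI-specific load compounds roughly twice as fast as data center demand overall, its share of the total keeps rising through the decade.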

VC perspective: Energy is the layer most generalist investors still underprice, and Southeast Asia is unlikely to host the deepest pools of capital flowing into hyperscale energy infrastructure. The region will be a buyer of compute capacity, not the primary financier of it. For SEA-focused VCs, the more relevant adjacencies sit around the data center fence line rather than inside it: workload-aware energy software, distributed power for industrial AI, and the sustainability-reporting tools that will increasingly govern how SEA banks and insurers procure AI compute.

Layer 2: Chips and Systems

Above energy sit chips and systems, the specialized silicon, primarily GPUs, that converts electricity into useful computation at scale. This layer is NVIDIA’s historical stronghold and remains critical for the entire AI ecosystem.

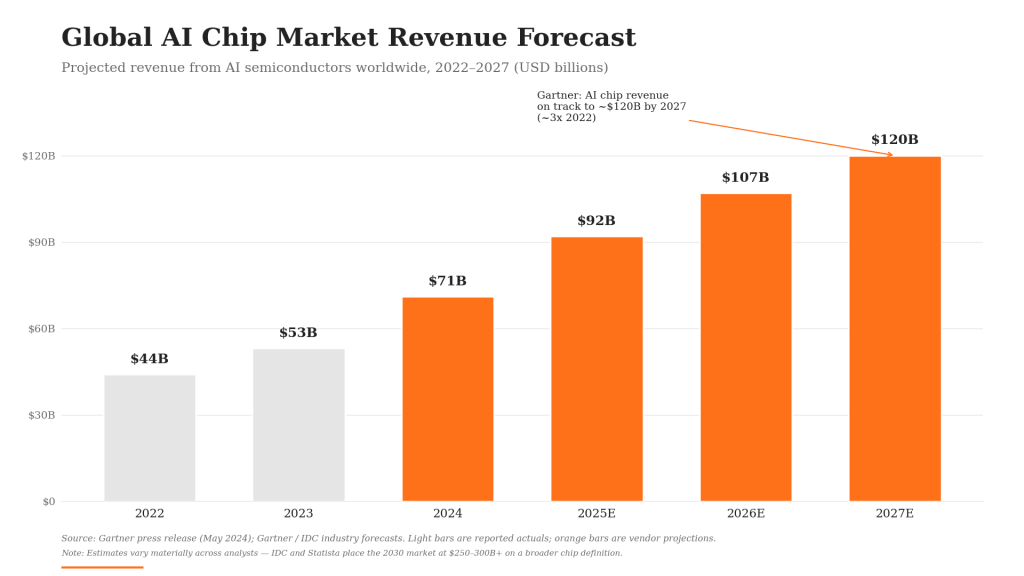

Modern AI, particularly deep learning, relies on the massively parallel processing at which GPUs excel. NVIDIA’s CUDA platform has been instrumental in establishing its GPUs as the de facto standard for AI development [3]. Continuous innovation in chip architecture keeps the computational ceiling rising: Blackwell, the successor to Hopper announced in March 2024, launched with claims of up to 25x lower cost and energy consumption than its predecessor for trillion-parameter inference [4]. Beyond individual chips, this layer also includes the integrated systems that house and connect those processors, such as NVIDIA’s DGX systems, which combine GPUs, high-speed interconnects, and software into purpose-built AI factories [5].

Global AI chip market revenue forecast: projected revenue growth for AI-specific semiconductors, 2022–2027. Source: Gartner press release (May 2024); Gartner and IDC industry forecasts.

VC perspective: Chip design at the frontier is a US, Taiwan, and increasingly China story; Southeast Asia’s role here lies in advanced packaging, test, and supply-chain adjacencies rather than design. For a regionally focused fund, the sharper question is downstream: which SEA companies will buy the most accelerator capacity, and which will turn that capacity into recurring revenue fastest? That question pushes the analysis up into the model and application layers.

Layer 3: Infrastructure and AI Factories

Building on chips and systems, the third layer is infrastructure: the land, power delivery, networking, cooling, and orchestration that turn tens of thousands of processors into a single working machine. In commercial terms, this is the layer of “AI factories” and cloud-based AI services: hyperscale data centers from AWS, Azure, and Google Cloud, along with NVIDIA’s own DGX Cloud, that provide on-demand access to AI compute [6].

Southeast Asia is, in fact, where a meaningful share of that physical buildout is now happening, though the region participates as a host rather than a financier. The shape of the buildout differs in every market [7][8]:

- Malaysia (Johor): ~6,500 MW of operational and planned capacity makes Johor the eighth-largest data center cluster in Asia Pacific, anchored by AirTrunk’s hyperscale campuses, YTL Power’s NVIDIA-backed AI park, and Microsoft’s land acquisitions and planned cloud region. The Johor-Singapore Special Economic Zone, formally established in January 2025, codified the cross-border setup with a 5% corporate tax rate for up to 15 years on qualifying activities, explicitly including AI and quantum-computing supply chains. At the national level, hyperscaler commitments to Malaysia include Google at $2 billion and Microsoft at $2.2 billion.

- Singapore: Despite tight power and land constraints, the city-state remains the regional anchor for cloud spend. AWS has committed S$12 billion through 2028; Google’s cumulative investment runs to roughly $5 billion.

- Indonesia: Capacity is projected to grow from roughly 300 MW today to 1,500 MW by 2028, with Microsoft’s $1.7 billion commitment among the largest single inflows.

- Thailand: Capacity is projected to reach 642 MW by 2028, a ~57% CAGR from a small base, with hyperscalers and regional players both expanding.

- Vietnam: As much as 8 GW of dedicated gas-fired power is being prepared to meet AI data center demand by 2030, and the sector now operates under the country’s first standalone AI Law, enacted in December 2025 and effective from March 2026.

What this region is hosting, in other words, is global AI factory capacity, not regional AI factory companies. The capital comes from sovereigns, hyperscalers, and infrastructure funds, and the operating model is industrial, not venture-scale. For Southeast Asian businesses and AI-native startups, the practical effect is that AI compute is increasingly available locally, with lower latency, more options for data residency, and improving unit economics over the next several years.

VC perspective: Like Layer 2, Layer 3 in Southeast Asia is mostly an import, even when the building sits in Johor or Jakarta or Ho Chi Minh City. The value pool inside Huang’s “AI factory” definition will be captured by hyperscalers, sovereigns, and the small set of infrastructure operators close to them, not by venture-backed challengers. The relevant question for an SEA-focused VC is not how to invest in the data center; it is how to position the application-layer companies that buy capacity from it. The region’s role as the host of the buildout, however, is itself a meaningful tailwind for everything stacked above. Cheaper, closer compute is what makes the next wave of Southeast Asian models and applications economically viable in the first place.

Layer 4: AI Models

The fourth layer, AI models, represents the intelligence itself: large language models, vision models, and other foundational systems trained on vast datasets. Models such as OpenAI’s GPT series, Google’s Gemini, and Meta’s Llama dominate the headlines as the “brains” of the AI revolution. The race to build more capable, efficient, and specialized models is a central theme of current AI innovation, and it drives demand back down the stack into infrastructure, chips, and ultimately energy.

For Southeast Asia, the realistic ambition is not to compete with frontier labs on capability, but to build models that better fit the region’s languages, regulatory regimes, and enterprise workflows. WIZ.AI, an Insignia Ventures portfolio company, is the clearest expression of that thesis. The company released the region’s first 7B-parameter foundation model trained on 10 billion Bahasa Indonesia tokens, and now operates conversational AI in 17+ languages and dialects across ASEAN and Latin America for more than 300 enterprises.

In a September 2025 conversation on On Call with Insignia, WIZ.AI Senior Director of AI Strategy and Partnerships Robin Li framed the difference between WIZ.AI and the wave of “wrapper” startups bluntly: “We are not right now starting from zero. We started already with fundamentals about the solution and also the business case. Right now, when the large model technology is here, it helps us to attend more use cases and to us that is market expansion. But I think to most of the competitors, the new company startups, they are right now just working on how to adapt technology into new use cases. That’s a total difference. We have a massive head start.” [9]

The same conversation surfaced a structural observation about AI demand in the region: “In China right now there’s a top-down trend that all of the leaders are trying to push the new technology into their business… But in Asia right now, companies normally, the industry people and customers, they’re waiting for the new trends from the AI technology startup companies.” That waiting demand (qualified buyers without a fitted product) is the gap a regionally tuned model layer is uniquely positioned to close.

VC perspective: Layer 4 in Southeast Asia rewards companies with proprietary data loops, multi-year head starts in deployment, and a fluency in local regulation that frontier labs cannot replicate by translating an English model. Insignia Ventures has previously argued that in the age of AI, moats matter more than ever, since AI lowers the cost of building but raises the bar on defending what is built [10].

Layer 5: AI Applications

Sitting at the top of the cake are the applications. This is where models are integrated into products and services that customers actually use: generative AI tools for content, AI-powered drug discovery, agentic workflow automation, intelligent customer service, predictive analytics for finance. Success at this layer is less about access to frontier models (increasingly a commodity) and more about workflow design, distribution, and feedback loops. The companies pulling away are AI-native: rebuilding processes from first principles rather than bolting an LLM onto a legacy SaaS product.

For Southeast Asia, this is the most strategically important layer in the cake. Insignia Ventures has consistently argued that the application layer is the region’s natural launchpad, because linguistic, cultural, and regulatory nuance creates defensible local advantages that pure-play global incumbents cannot easily replicate, and that the evolved Southeast Asia VC playbook should concentrate capital around that edge [11][12].

fileAI, founded by CEO Christian Schneider and COO Clare Leighton in Singapore, illustrates the model. The company has built a horizontal AI-powered automation platform that structures, verifies, and enriches data for back-office workflows, a category that depends as much on trust and compliance as on raw model accuracy. In a December 2025 interview with Insignia and the New York Stock Exchange, Leighton was direct about how that priority emerged: “We have built a lot of our AI components and models ourselves. When we started, we thought things like accuracy with proprietary models and new AI components for functionality were going to be the go-to-market driver. But really, in this part of the world and what we’ve learned over the years is that it’s about trust, compliance, and change management.” [13]

In the same interview, Leighton offered the framing that best captures why this layer is being repriced now: “We see the shift to agentic AI being completely transformative, like a once-in-a-generation type of shift… I think of it as likening to what cloud did for infrastructure, effort, and cost in starting and running companies, not just startups, but also enterprise. Agentic AI is doing the same thing for operational lift.” That comparison, agentic AI as the cloud-equivalent unlock for back-office operations, is the bull case for the entire application layer.

Diaflow, which raised its seed round led by Insignia Ventures in February 2026, occupies a complementary part of Layer 5: an AI-native workflow platform that lets non-technical users build and deploy AI agents without writing code [14]. In a March 2026 episode of On Call with Insignia, founder and CEO Jonathan Viet Pham described how years of failed enterprise-automation projects shaped the company’s design choice: “We built most of the products as scalable products for enterprise and we realized that it didn’t save them time, it didn’t save them money. They invested a lot of money, but they followed the old mindset which is still buttons and clicks. Nobody wanted to change their behavior to another software, right?” Diaflow’s response was to abandon button-and-click as the interface entirely. “When OpenAI introduced the new interface that people could chat with, we thought, ‘Hey, why don’t we build software that is fully automated? It doesn’t rely on traditional methods of data input, but it’s a brand new one.’ So that’s the reason why we started Diaflow.” [15]

The Agent-to-Agent Rails Inside the Utility Layer

The application layer also surfaces a quieter but increasingly load-bearing dynamic: as workflows shift from human-driven to agent-driven, the legacy financial rails underneath them are being repriced. Card networks, correspondent-banking chains, and human-in-the-loop reconciliation were designed for human throughput. Agentic workflows operating at machine speed expose every basis point of friction (interchange, FX spread, T+2 settlement, manual KYC) as a tax on autonomy. The rails that survive that scrutiny are programmable, low-cost, near-real-time, and natively addressable by software: stablecoin settlement, programmable treasury, and orchestrated cross-border endpoints.

StraitsX is one expression of that shift, operating BIN-sponsored stablecoin infrastructure for cross-border payments, exactly the rail an AI agent reconciling regional invoices or settling a marketplace transaction would prefer over a multi-day correspondent chain. Finmo is another, on the treasury and CFO-intelligence side: programmable cash management, FX, and reconciliation built for finance teams that increasingly operate alongside agentic systems rather than on top of static spreadsheets. Neither company is Huang’s Layer 3 (they are not AI factories), but their value compounds with every additional agent and every additional automated workflow plugged into the application layer above them.

VC perspective: Layer 5 is arguably the most diverse and accessible part of the cake for venture investment, and the layer where Southeast Asian founders are best positioned to build category leaders. The companies pulling ahead share three traits: they are AI-native rather than AI-bolted-on; they own the data and workflow loops that compound with use; and they treat compliance and change management as a feature, not a source of friction. Those are not generic AI virtues. They are the specific qualities that make application-layer companies defensible when frontier model access becomes a commodity.

What the Cake Means for Southeast Asia

Huang’s five-layer cake is useful in Southeast Asia precisely because it forces a discipline most regional AI investing still lacks: thinking about the full stack at once, not one slice at a time. The honest reading is that the region will be a host and a buyer of layers one through three, not a primary financier of them. The serious work, and the serious returns, sit in models and applications, where the next generation of regional category leaders is already being built.

References

[1] NVIDIA. (2026, January 22). ‘Largest Infrastructure Buildout in Human History’: Jensen Huang on AI’s ‘Five-Layer Cake’ at Davos. NVIDIA Blog. https://blogs.nvidia.com/blog/davos-wef-blackrock-ceo-larry-fink-jensen-huang/

[2] International Energy Agency. (2025, April). Energy and AI. https://www.iea.org/reports/energy-and-ai

[3] NVIDIA. CUDA Toolkit. https://developer.nvidia.com/cuda-toolkit

[4] NVIDIA. (2024, March 18). NVIDIA Blackwell Platform Arrives to Power a New Era of Computing [Press release]. https://nvidianews.nvidia.com/news/nvidia-blackwell-platform-arrives-to-power-a-new-era-of-computing

[5] NVIDIA. NVIDIA DGX Platform. https://www.nvidia.com/en-us/data-center/dgx-platform/

[6] NVIDIA. NVIDIA DGX Cloud. https://www.nvidia.com/en-us/data-center/dgx-cloud/

[7] Arizton / OpenPR. (2025, October). Malaysia Data Center Market Surges Past USD 11.4 Billion as Johor Emerges as Southeast Asia’s New Hyperscale Power Hub. https://www.openpr.com/news/4450374/malaysia-data-center-market-surges-past-usd-11-4-billion-as-johor; Asia Society Policy Institute. Malaysia’s Gamble: Turning Data Centres Into Industrial Power. https://asiasociety.org/policy-institute/malaysias-gamble-turning-data-centres-industrial-power

[8] Digital in Asia. (2026, April 8). Southeast Asia Data Centre Boom 2026. https://digitalinasia.com/2026/04/08/southeast-asia-ai-data-centre-boom/; Data Center Frontier. Thailand and Indonesia Look to Become Data Center Hubs For APAC. https://www.datacenterfrontier.com/site-selection/article/55275492/thailand-and-indonesia-look-to-become-data-center-hubs-for-apac

[9] Insignia Business Review. (2025, September 25). Driving conversational AI adoption from China to Singapore then the world with WIZ.AI Senior Director of AI Strategy and Partnerships Robin Li. https://review.insignia.vc/2025/09/25/wiz-ai-robin-li/

[10] Insignia Business Review. (2025, April 15). In the Age of AI, Moats Matter More Than Ever: Why Defensibility is Your Startup’s Most Valuable Asset. https://review.insignia.vc/2025/04/15/moats-ai/

[11] Insignia Business Review. (2026, January 14). Why Southeast Asia is the Ideal Launchpad for Applied AI. https://review.insignia.vc/2026/01/14/why-southeast-asia-is-the-ideal-launchpad-for-applied-ai/

[12] Insignia Business Review. (2026, April 9). Think Like a VC #2: The Evolved VC Playbook for Southeast Asia. https://review.insignia.vc/2026/04/09/southeast-asia-vc/

[13] Insignia Business Review. (2025, December 23). fileAI Founders CEO Christian Schneider and COO Clare Leighton on Building a Global AI-Powered Automation Engine. https://review.insignia.vc/2025/12/23/fileai-nyse/

[14] Insignia Business Review. (2026, February 24). Diaflow secures seed funding from Insignia Ventures Partners to accelerate AI adoption in business workspaces. https://review.insignia.vc/2026/02/24/diaflow-seed/

[15] Insignia Business Review. (2026, March 5). Why AI native companies can’t chase features and what they should focus on instead with Diaflow CEO and co-founder Jonathan Pham. https://review.insignia.vc/2026/03/05/diaflow-jonathan-pham/